How Machine Translation Compromised our Ability to Understand the Positive Potential of Generative AI in Translation

Gen AI has incredible potential, but our past experience with MT has made it difficult for our community to adopt it with an open mind. How do we push through this?

Intro

Our past experience with Machine Translation (MT) directly shapes how we assimilate the advances of Generative AI (Gen AI). Instead of seeing Gen AI applied to translation as an entirely distinct paradigm, we follow typical human cognition and conflate the unknown with the closest familiar idea, however poorly that idea is itself understood. [1]

Most of us (non-experts) don’t understand how MT works at a granular level. We can’t deeply conceptualize the differences between rule-based vs. statistical, tuning vs. training, and neural vs. adaptive. Maybe we broadly understand the concepts, but most users are not experts in computational linguistics. We get the gist of it: text goes in in one language and comes out in another. Neural sounds better than rule-based, and so on.

Source: https://www.freecodecamp.org/news/a-history-of-machine-translation-from-the-cold-war-to-deep-learning-f1d335ce8b5/

When something new like Generative AI enters mainstream awareness, we hang on to whatever we can to make sense of it. So it’s only natural that we make sense of Gen AI in light of its closest relative: our experience with MT. Real education requires time that most of us don’t have. So we skim through articles, headlines, presentations, and social media posts, but few of us can set aside a few months to deeply understand how an LLM works at a structural level and arrive at our own conclusions based on first-hand experience. Instead, we have to make quick assessments with information that is shallow and preconditioned at best. The initial result is tragic:

Translators worried about their livelihoods

Companies thinking about how to cut corners in ways that can be potentially harmful to their brand and greater community

Manifestos against the use of Generative AI in translations

Growing, unfounded concerns over privacy

Disinformation and misinformation flying all over the place

Let’s first clear the air. MT is a wonderful, almost magical thing in and of itself: text in one language goes in, text in another language comes out. Like any tech, its potential (both constructive and destructive) is dictated by how we choose to adopt it as a society. Over the past twenty years, our use of MT has been largely:

Shallow (Disconnected from technical expertise, strategy, and decision-making)

Disconnected (Research and Development have been deeply removed from actual adoption and User Experience)

Greedy (Focus has been excessively on margins and productivity with little or no attention to the human experience and broader social impacts)

I have heard countless sob stories about the misuse of MT, about how translators have come to abhor it, and about epic translation fails, but I have not heard success stories in anywhere near the same proportion. Given how amazing MT is in and of itself, that is surprising. I would have expected it to be the other way around.

How MT was Adopted and Understood

Shallow Understanding

MT is great in certain circumstances and potentially perilous in others. If you need to quickly make sense of what someone is saying, and you know to take any awkwardness with a grain of salt, it can be life-saving. But if you need to make a critical professional decision that depends on the accuracy of detailed information, it could literally mean death. Most content falls somewhere in between: neither inconsequential nor a matter of life or death. To deal with this gray zone, you need the technical acumen and expertise to evaluate a hypothetical MT sophistication gradient:

Raw MT

Tuned MT

Tuned and Trained MT

Tuned and trained MT with some human review

Tuned and trained MT with a lot of human review

Tuned and trained MT with full human review

This gradient can have different combinations, definitions, and permutations, and it varies with language combination and subject matter. It’s just there to illustrate that you can work with something like MT in a myriad of different ways. If you know your content and your processes, you can run tests and make smart decisions about using the right workflow for the right content. But in the real world, most decisions are shallow, governed purely by financial constraints or made by people without the necessary technical expertise.
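As a rough illustration of matching workflow to content, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `Content` class, the 0 to 5 scales for `risk` and `visibility`, and the rule that the higher of the two picks the tier. It is not an established methodology, only one way such a decision could be written down and then tested against real content.

```python
from dataclasses import dataclass

# The six tiers of the hypothetical gradient, from least to most human involvement.
WORKFLOWS = [
    "Raw MT",
    "Tuned MT",
    "Tuned and trained MT",
    "Tuned and trained MT with some human review",
    "Tuned and trained MT with a lot of human review",
    "Tuned and trained MT with full human review",
]


@dataclass
class Content:
    name: str
    risk: int        # assumed scale: 0 (inconsequential) to 5 (errors could cause serious harm)
    visibility: int  # assumed scale: 0 (rarely read) to 5 (customer-facing, heavy traffic)


def pick_workflow(content: Content) -> str:
    """Pick a tier: the higher of risk and visibility decides how much human review is used."""
    for score in (content.risk, content.visibility):
        if not 0 <= score <= 5:
            raise ValueError("risk and visibility must be between 0 and 5")
    return WORKFLOWS[max(content.risk, content.visibility)]


if __name__ == "__main__":
    for item in (
        Content("customer-facing home page", risk=3, visibility=5),
        Content("rarely visited user library", risk=1, visibility=0),
    ):
        print(f"{item.name}: {pick_workflow(item)}")
```

The point of the sketch is only that the decision becomes explicit and reviewable. The scores themselves still have to come from people who know the content and have tested the engine on it.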

One would think this shallowness would improve over time, but it hasn’t. To this day, I hear from people who are thinking of running a customer-facing home page through MT, just as I see people manually translating entire user libraries that get little to no traffic.

It’s safe to state that as an industry we have been ineffective at educating the broader audience. Instead of conveying that MT can be magnificent if adopted correctly, the message has largely been that MT is unreliable and a liability. Had we been better at communicating these concepts with a little more depth, chances are that the broader community would have been able to adopt MT with greater effectiveness.

Disconnection from User Experience

The primary users of MT are end readers and translators who receive pre-translated files. But most of the computational linguists working on MT models that I have talked to over the years were, understandably, taking an engineering-first approach. The focus was predominantly on BLEU scores and other relevant metrics as opposed to the translator or reader experience.

The disconnection problem persists along the entire production chain. Companies outsource to large agencies, which then outsource to translators or smaller agencies, and the bulk of the people actually using MT are typically a few layers removed from the decision-makers.

Non-dialogical Adoption

Translators, for the most part, have been given MT output as a suggested translation and then have had to work on it in order to reclaim authorship over their text. This creates instant tension between how the MT approaches language and how the translator does. The entire premise of MT was non-dialogical: the user was offered a translation and that was the end of it. Outside of specific training scenarios, MT wasn’t adopted as a continuous conversation with an engine.

The translator experience is shaped far more by the translator’s ability to interact with the feed than by the quality of the feed itself.

Excessive Focus on the Bottom Line

As is the natural case for any technocapitalist innovation, the bottom line is some form of profit. It’s up to us as a community to widen the lenses through which we understand and adopt the innovation to turn it into something positive, constructive, and meaningful.

For the most part, MT adoption has focused only on the bottom line, not on process and not on broader social impact. There are two key caricatures that I have seen again and again:

Case 1: The Agency Caricature

Pre-translate it with MT and send it out for translation, paying a fraction of the full translation rate. Instead of being framed as a translation ally, MT becomes an enemy of translation quality. With variable results to work from, translators are left, for the most part, to rush through the work in order to make up for the pay cut. The tools for effective post-editing are mostly unavailable, and translators now have to make sense of MT as a feed that needs to be reconciled with glossaries and translation memories. While one can argue that there are significant efficiencies in using MT as an initial translation draft, you need a fair methodology in place that accounts for post-editing coefficients and assesses effort with reasonable accuracy. For the most part, this wasn’t done. For much of the translation community, MT was reduced to the same work for less pay.
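To make the idea of an effort-based methodology concrete, here is a minimal Python sketch. It is an assumption-laden illustration, not an industry formula: it measures effort as the share of words that changed between the MT draft and the post-edited text (using the standard library’s `difflib.SequenceMatcher`), and it scales pay between a hypothetical floor of 40% of the full rate and the full rate. The function names, the word-level comparison, and the `floor` value are all made up for this example.

```python
from difflib import SequenceMatcher


def edit_effort(mt_output: str, post_edited: str) -> float:
    """Share of the text that changed during post-editing, from 0.0 to 1.0 (word level)."""
    mt_words = mt_output.split()
    final_words = post_edited.split()
    if not mt_words and not final_words:
        return 0.0  # nothing to compare, so no effort
    # ratio() is 1.0 for identical word sequences and 0.0 when no words match.
    similarity = SequenceMatcher(None, mt_words, final_words, autojunk=False).ratio()
    return 1.0 - similarity


def post_editing_rate(full_rate: float, effort: float, floor: float = 0.4) -> float:
    """Scale the per-word rate with measured effort; `floor` is an assumed minimum share."""
    if not 0.0 <= effort <= 1.0 or not 0.0 <= floor <= 1.0:
        raise ValueError("effort and floor must be between 0.0 and 1.0")
    return full_rate * (floor + (1.0 - floor) * effort)


if __name__ == "__main__":
    draft = "the contract ends in the day of signature"
    final = "the contract terminates on the date of signature"
    effort = edit_effort(draft, final)
    print(f"effort: {effort:.2f}, rate: {post_editing_rate(0.10, effort):.3f} per word")
```

Word-level difference is a crude proxy: it misses the time spent reading and verifying segments that ended up unchanged, which is one reason the sketch keeps a floor instead of letting the rate drop to zero.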

Case 2: The End-User Caricature

I’ll just translate this with MT and everything will be fine. Quick, easy, and nearly costless. That is, until feedback comes pouring in like an avalanche over something that was incomprehensible, offensive, meaningless, compromising, or marred by some other damaging linguistic inaccuracy. I have seen this happen again and again with videos, contracts, websites, and other content across industries and subject matters.

Gen AI

So far I have argued that MT, which is a good thing in and of itself, was sub-optimally understood and adopted. I believe we are making the same mistake again with Gen AI.

Most people I talk to understand an LLM as an alternative to MT: maybe a smarter, more contextually aware one, but nonetheless just an upgrade to MT. By framing it that way, we open the door to carrying on the suboptimal implementation legacy we have inherited from MT.

But the way I see it, Gen AI is an entirely different paradigm from MT. For starters, most mainstream LLMs were not conceived, trained, or tested as translation engines. Rather, they were trained as predictive devices. It just so happens that they can translate but that’s not what they were meant for by design.

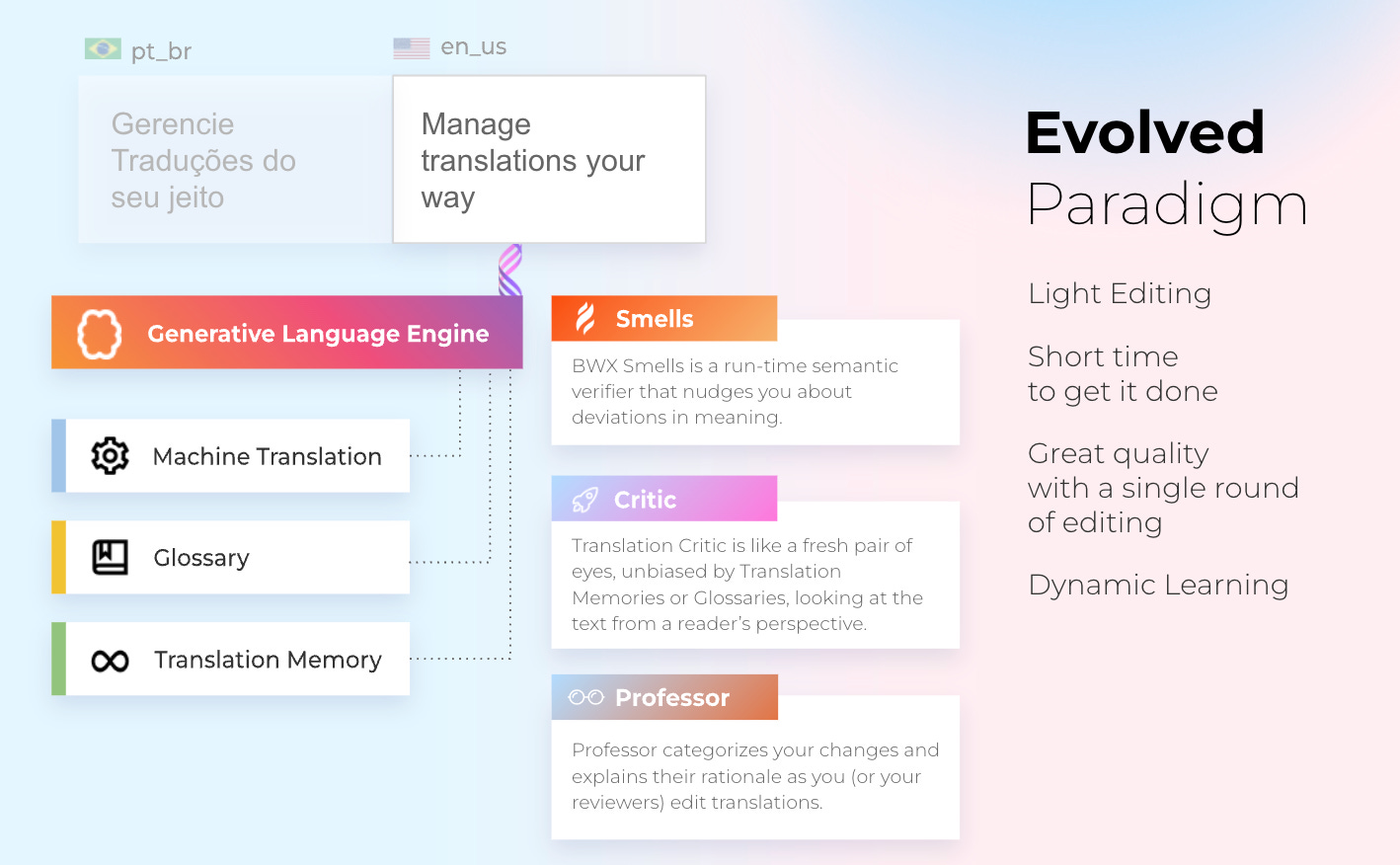

An LLM is able to run a myriad of analyses and inferences that could previously only be ascribed to a human being: detecting deviations in meaning, assessing quality, providing alternatives based on a given set of instructions, and abiding by a style guide. The list goes on, but an LLM, and the entire Machine Learning paradigm, fundamentally opens the door to a different kind of relationship between human and machine: one of dialogue.

Whereas with MT a translator was left with a cold feed to fix, LLMs open the door for translators to interact dynamically with refined contextual decisions that keep on learning. The translator is not just a fixer of a feed. They are now working in a continuous linguistic flow with an engine that is also learning and adjusting according to their choices. The video below exemplifies this in Spanish.

In this light, Gen AI is clearly not a human replacer but a human enhancer. As Bridget Hylak, Administrator of ATA’s Language Technology Division, described in our last BWX Academy Session:

Gen AI in translation is currently closer to a robot that assists a surgeon granting them unprecedented levels of precision and control than it is to anything that will render translators less important or irrelevant.

Gen AI can clearly take care of linguistic grunt work and heavy lifting, which opens up room for more creativity and intellectual sophistication.

It can also check for semantic errors in real time, allowing translators to go out on more limbs to convey meaning, knowing that they have an additional set of eyes to help them spot distortions, omissions, and other destructive losses of meaning in the translation process.

The translator also becomes a language training supervisor, or what we call a Language Flow Architect. This innovation amplifies the value of translation work, since good translation work can significantly decrease the effort required from other translators working on similar content for a given company or agency moving forward.

But I see so many people talking about AI as something that is going to replace humans and take away jobs. Not a creative optimizer, but a destroyer of human value.

Where do we go from here?

Shallowness is expected

Shallowness prevails: most people will understand Gen AI as a GPT interface, MT on steroids, not an entirely different paradigm. That’s not a problem in and of itself, simply a challenge that must be overcome. Taking all information with a grain or two of salt and taking the time to go after primary sources and first-hand experience both pay off big time in the long run.

Memes, Hype, and Epic Fails

In the example below, I decided to use Google’s Duet instant subtitling feature. Our Head of Marketing was speaking in Brazilian Portuguese at our All Hands meeting and Google’s AI translator completely botched it. He was talking about how happy he was to be with everyone, and Google’s subtitles showed me a Chinese character and the phrase “full mouth a penis on batch papu”. I didn’t know where to hide…

Examples like this will be commonplace. They will be capitalized on, and preyed on. Instead of trying to understand what’s behind this debacle and how to make it better, things quickly become memes. And once a meme, it’s etched for as long as eternities last in the internet's collective consciousness.

In this case, for instance, a deeper analysis reveals that the translation fiasco was partly due to the fact that I had incorrectly set the speaker’s language to English. The tech should have done better, but so should the user; in this case, me.

But the opposite is also true: 7.6 million people (and counting) have seen a person being translated instantly and perfectly, with seamless lip-syncing. Many of them believe the tech is there.

The truth, though, is that this was not instant (as the correction shows). It was processed asynchronously. While the tech remains cool, it’s way oversold, and the speaker is using simple words with clearly enunciated diction. Viewers who are not translators are inclined to believe translation has already become obsolete. As techies love to say, “It’s a solved problem.” Only it’s not.

A Strong Technically Savvy Community is Key

Little dialogue, shallow understanding, and a fragmented sense of community all contributed to a less-than-optimal outcome with MT. If we don’t change, we will make different but analogous mistakes all over again.

The Golden Opportunity

Dialogue, mutual understanding, and building together aren’t just collective utopian ideals. They are real possibilities of the boundless virtual networks that currently tie us all together. If translators don’t stand idly by as downstream service vendors, but instead take a proactive, early-adopter stance on the tech, they will have a significant head start. Five years from now, I can see an outcome where people are translating their content left and right with Gen AI, where translators are paid even more laughable rates, and where the profession has become an archaic relic. But we can also envision an outcome where a lot more content is produced, translation standards are significantly higher, and translators are more recognized for their intellectual and cultural prowess. It really is up to us as a community to determine this outcome. And the only way to have a say in it is to experiment with the tech, incorporate it into your craft, and build a strong community together through dialogue and mutual understanding.

Yes, I can’t help but dream.

[1] George Lakoff, Women, Fire, and Dangerous Things (Chicago: University of Chicago Press, 1987).

"Once bitten, twice shy." I understand why many are skeptical about tech solutions for translation, and you've effectively outlined the reasons to believe that things are different now. I'm truly excited to see where this AI wave can take us.

Gabriel, congratulations on the text, it was excellent.

Human beings by nature are resistant to change, even if these changes make their daily lives easier. The fear of the unknown is natural, but from the moment people present the concepts, showing them in practice in a simple way, people will end up understanding and accepting them little by little, even seeing possibilities for improvements. This is what makes advances possible.

It's great to see you being one of those people who present practical concepts and make it possible for others to contribute too, so that the fear of the unknown becomes a thing of the past, as we are living in the present and the future is what awaits us.